The video production industry has grown by leaps and bounds in recent years, and AI is increasingly used to improve and speed up production workflows, saving professionals time and money and helping them create higher-quality final videos.

The rest of this article looks at how organizations can use AI strategically to transform the video production workflow, driving higher customer satisfaction and higher profits.

How Does AI Work?

Before AI, media production was often a long and tedious process that could take weeks or even months, because most production tasks had to be carried out manually. However, developments in AI technology have dramatically changed the video production industry, enabling the creation of a higher-quality final video in a fraction of the time it used to take.

In 2022, Grand View Research reported that AI in the media and entertainment sector was worth nearly $15 billion and estimated that it would reach $100 billion by 2030. With growth on that scale reflecting rising industry demand, it is worth understanding the mechanisms driving it.

AI learns through trial and error, using algorithms and deep learning. Trial and error allows the AI to interact with its environment, observe the results, learn from its mistakes, and grow more efficient over time.

AI’s primary learning process is commonly split into three methods used in machine learning:

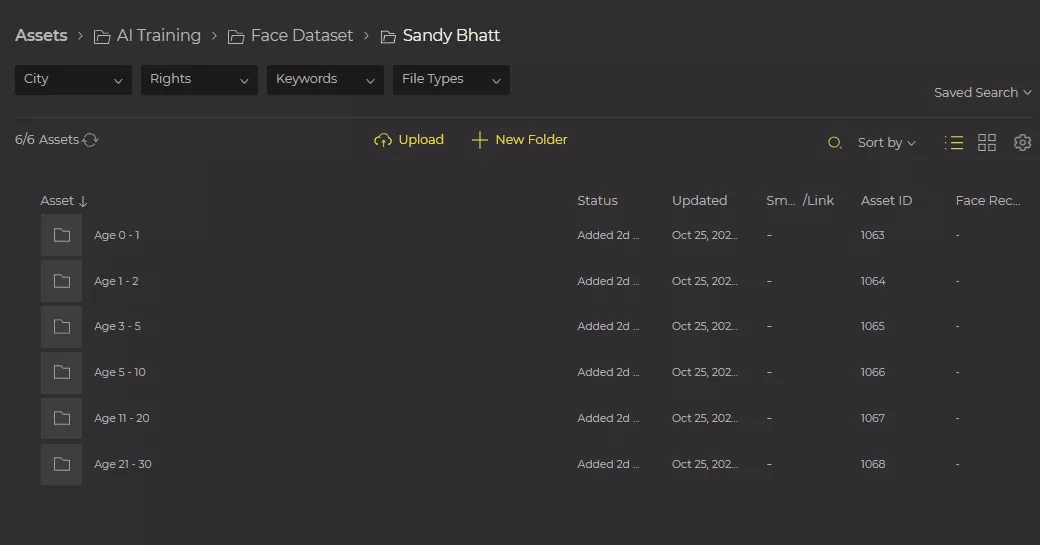

- Supervised Learning: This is when AI is trained on data sets and given specific tasks to complete. For example, Evolphin Zoom MAM allows users to train its AI face recognition engine to automatically recognize all matching faces across millions of images. This enables Zoom MAM users to save time otherwise spent manually looking through images to find characters’ faces for their video projects.

- Unsupervised Learning: For this, AI uses large amounts of data to recognize patterns and identify significant concepts. Examples include anomaly detection, association rule learning, and metadata application. For example, Evolphin Zoom MAM has out-of-box metadata fields that are automatically populated and imported into the system.

- Reinforcement Learning: Here, AI agents learn from their environment by taking actions, receiving rewards, and adjusting their behavior accordingly. Reinforcement learning algorithms utilize a trial-and-error approach to maximize rewards and minimize punishment until reaching an optimal level.

Once AI learns through these methods, it can recognize patterns, create rules and strategies, and use feedback from its environment. This enables AI to refine its processes for completing tasks, reach proficiency, and improve continuously.
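The trial-and-error loop described under reinforcement learning can be sketched in a few lines. This is a minimal, illustrative epsilon-greedy agent, not a description of how any Evolphin product works: the function name `run_bandit`, the reward probabilities, and the parameter values are all assumptions chosen for the example.

```python
import random

def run_bandit(reward_probs, steps=5000, epsilon=0.1, seed=0):
    """Epsilon-greedy agent: explore at random sometimes, otherwise exploit."""
    rng = random.Random(seed)
    counts = [0] * len(reward_probs)    # how often each action was tried
    values = [0.0] * len(reward_probs)  # running average reward per action
    for _ in range(steps):
        if rng.random() < epsilon:
            action = rng.randrange(len(reward_probs))  # explore a random action
        else:
            # exploit the action with the best average reward so far
            action = max(range(len(values)), key=values.__getitem__)
        # The environment pays a reward of 1 with a hidden probability (hypothetical values).
        reward = 1.0 if rng.random() < reward_probs[action] else 0.0
        counts[action] += 1
        # Incremental update of the running average for the chosen action.
        values[action] += (reward - values[action]) / counts[action]
    return values, counts

values, counts = run_bandit([0.2, 0.5, 0.9])
best_action = max(range(len(values)), key=values.__getitem__)
print(best_action, [round(v, 2) for v in values], counts)
```

The agent is never told which action pays best. It finds out by acting, observing the reward, and shifting its choices toward what has worked, which is the behavior the reinforcement learning bullet describes.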

Where does AI fit into the video production workflow?

By taking a deeper look into the role of AI in video production, it’s easy to find practical uses that demonstrate why it is so transformative to the media industry.

Metadata management

One of the biggest ‘time thieves’ of the video editing process is the task of tagging and organizing the metadata that comes with each video or image. Media managers rely on metadata to index, search for, and locate relevant assets. Without metadata, they would have to sift through hundreds, if not thousands, of terabytes’ worth of files to find what they need.

As soon as tags and groups are created for metadata, a fresh batch of unorganized data streams in, resulting in a never-ending battle for data organization. This is where AI/Machine Learning takes over the heavy lifting, performing initial metadata extraction and tagging project files without requiring human effort.

Discover on ingest

Evolphin’s Video FX module includes an ingest server that can be configured to copy a video proxy or an image to AI services like Google Video Intelligence or Amazon Rekognition API. Whenever you upload new media, these APIs can take your video proxy or image, run an in-depth analysis, and generate metadata in a machine-readable format.

Once this process is complete, Evolphin Zoom MAM uses its API to automatically apply metadata tags in a custom group. This keeps machine-generated metadata distinct from human-curated metadata.
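The idea of keeping machine-generated metadata apart from human-curated metadata can be illustrated with a small sketch. Everything here is hypothetical: the `Asset` class, the `apply_ai_tags` function, the `ai_generated` group name, the label format, and the confidence threshold are assumptions for illustration and do not reflect the actual Zoom MAM API or the response format of any AI service.

```python
from dataclasses import dataclass, field

@dataclass
class Asset:
    name: str
    # group name -> {field name -> list of tag values}
    metadata: dict = field(default_factory=dict)

def apply_ai_tags(asset, labels, group="ai_generated", min_confidence=0.8):
    """Store confident machine labels in their own group, leaving other groups untouched."""
    ai_fields = asset.metadata.setdefault(group, {})
    for label in labels:
        if label["confidence"] >= min_confidence:
            tags = ai_fields.setdefault(label["type"], [])
            if label["value"] not in tags:  # avoid duplicate tags on re-analysis
                tags.append(label["value"])
    return asset

# Human-curated tags live in their own (hypothetical) "editorial" group.
asset = Asset("match_highlights.mp4", {"editorial": {"keywords": ["derby", "final"]}})

# Stand-in for labels returned by an analysis service (made-up values).
labels = [
    {"type": "logo", "value": "SponsorCo", "confidence": 0.93},
    {"type": "face", "value": "Player 10", "confidence": 0.88},
    {"type": "object", "value": "umbrella", "confidence": 0.41},  # below threshold, skipped
]
apply_ai_tags(asset, labels)
print(asset.metadata)
```

Writing into a separate group means a reviewer can audit, correct, or discard machine tags without touching what people entered by hand.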

This mechanism integrates with Evolphin’s data migration tools, making millions of assets automatically discoverable soon after raw footage is ingested into the Zoom MAM platform. For historical data previously stored on LTO tapes, hard drives, etc., the ability to search inside video archives and find specific images in the blink of an eye is a game-changing money maker.

Analyze on-demand

Alternatively, users can generate metadata on demand using Evolphin Zoom Asset Browser. This feature is helpful for those who prefer to use an AI learning service of their choice, such as Google Video Intelligence, Valossa, or Amazon Rekognition API. They simply right-click on any file to automatically send a proxy of the asset to their preferred AI learning engine.

Like all Evolphin Zoom MAM’s integrations, the entire process runs in the background, freeing users to perform other tasks. Zoom MAM tags and places the generated metadata into easily discoverable, premade metadata fields, making it possible to scan archives for relevant clips by face, logos, color, and many more tags.
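To show why tagged metadata makes archives quick to scan, here is a minimal sketch of a tag index. The function names `build_index` and `search`, the field names, and the sample clips are all invented for illustration; this is one common way to implement tag search, not a description of Zoom MAM internals.

```python
from collections import defaultdict

def build_index(assets):
    """Map each (field, value) pair to the set of asset names carrying it."""
    index = defaultdict(set)
    for name, fields in assets.items():
        for field_name, values in fields.items():
            for value in values:
                index[(field_name, value.lower())].add(name)
    return index

def search(index, **criteria):
    """Return the assets that match every field=value criterion given."""
    matches = [index.get((f, v.lower()), set()) for f, v in criteria.items()]
    return sorted(set.intersection(*matches)) if matches else []

# Hypothetical archive with AI-generated tags per clip.
assets = {
    "clip_001.mov": {"face": ["Player 10"], "logo": ["SponsorCo"], "color": ["blue"]},
    "clip_002.mov": {"face": ["Player 7"], "logo": ["SponsorCo"], "color": ["red"]},
    "clip_003.mov": {"face": ["Player 10"], "color": ["red"]},
}
index = build_index(assets)
print(search(index, face="Player 10", logo="SponsorCo"))
```

Because the index is built once, each query is a handful of set lookups rather than a scan over every file.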

Speech-to-text and auto-captions

AI can help with speech-to-text and auto-captions by analyzing audio recordings and generating caption text from the transcription, typically faster than transcribing by hand. Machine learning models trained on speech patterns help make the resulting captions more accurate.
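As a rough illustration of the last step, turning a timed transcript into captions, the sketch below renders segments as SubRip (SRT) caption blocks. The segment data and the function names `to_timestamp` and `to_srt` are assumptions; in practice the timed segments would come from whichever speech-to-text engine is in use.

```python
def to_timestamp(seconds):
    """Format seconds as an SRT timestamp, HH:MM:SS,mmm."""
    total_ms = round(seconds * 1000)
    hours, rest = divmod(total_ms, 3_600_000)
    minutes, rest = divmod(rest, 60_000)
    secs, ms = divmod(rest, 1000)
    return f"{hours:02d}:{minutes:02d}:{secs:02d},{ms:03d}"

def to_srt(segments):
    """Render (start, end, text) segments as numbered SRT caption blocks."""
    blocks = []
    for number, (start, end, text) in enumerate(segments, start=1):
        blocks.append(f"{number}\n{to_timestamp(start)} --> {to_timestamp(end)}\n{text}\n")
    return "\n".join(blocks)  # blank line between caption blocks

# Hypothetical transcript segments: (start seconds, end seconds, text).
segments = [
    (0.0, 2.5, "Welcome back to the studio."),
    (2.5, 6.04, "Tonight we look at the season so far."),
]
print(to_srt(segments))
```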

Create automated rough cuts

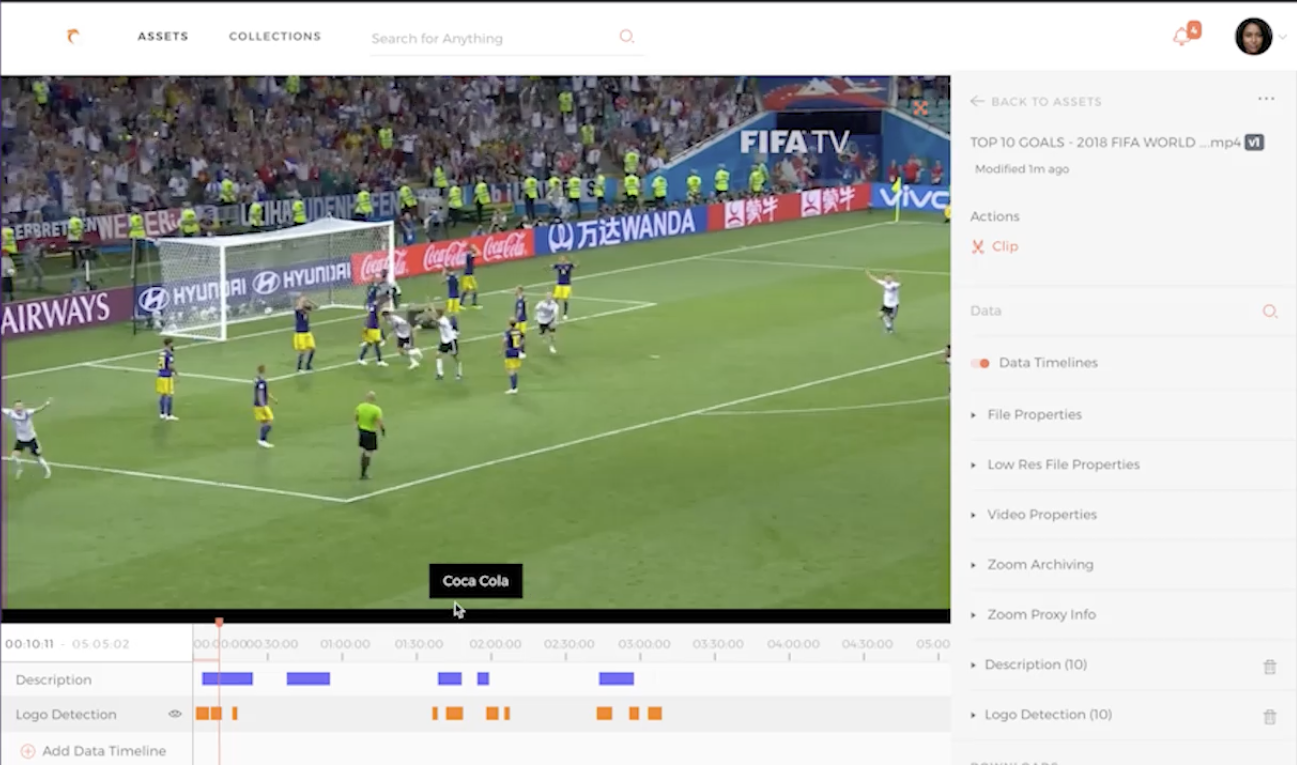

While AI-powered face recognition and logo detection capabilities save video editors a significant amount of time, the post-production workflow cannot be truly optimized if these capabilities are left out of the editing process. Next-generation MAM systems like Evolphin Zoom MAM use these deep integrations to help video producers automatically cut footage without needing to manually scrub through long videos.

In practice, Evolphin Zoom AI automatically marks (and cuts at) ‘in’ and ‘out’ points around relevant moments using logo detection, celebrity face recognition, and other inference rules. This mechanism enables a streamlined video production workflow in which producers can easily auto-select preferred clips to create rough cuts and send them to editors as Final Cut Pro XML sequences.
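One plausible way to derive ‘in’ and ‘out’ points from detections is to merge nearby detection timestamps into padded ranges. The sketch below assumes a detector that reports the times (in seconds) at which a logo or face was seen; the function name `detections_to_ranges`, the gap and padding values, and the sample data are hypothetical and are not taken from Evolphin’s implementation.

```python
def detections_to_ranges(hit_times, max_gap=2.0, padding=1.0):
    """Merge detection timestamps (seconds) into padded (in, out) ranges."""
    ranges = []
    for t in sorted(hit_times):
        if ranges and t - ranges[-1][1] <= max_gap:
            ranges[-1][1] = t      # close enough: extend the current range
        else:
            ranges.append([t, t])  # too far apart: start a new range
    # Pad each range so cuts do not start or end exactly on the detection.
    return [(max(0.0, start - padding), end + padding) for start, end in ranges]

# Hypothetical timestamps at which a sponsor logo was detected.
logo_hits = [12.0, 13.0, 14.0, 15.0, 40.0, 41.0, 42.5, 90.0]
print(detections_to_ranges(logo_hits))
# [(11.0, 16.0), (39.0, 43.5), (89.0, 91.0)]
```

Each resulting pair is a candidate clip a producer could accept or reject when assembling a rough cut.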

Create custom AI rules

Beyond commonly applied AI functions, Evolphin Zoom MAM enables users to create custom automated sequences using AI. For example, users can scrub files to extract and send images of specific celebrities to the marketing department, or upload media containing a brand logo for licensing purposes.
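Conceptually, a custom rule pairs a condition on an asset’s metadata with an action to take. The sketch below is a generic illustration of that pattern only; `make_rule`, `run_rules`, the action names, and the sample metadata are invented here and do not represent how rules are defined in Zoom MAM.

```python
def make_rule(name, field, value, action):
    """A rule fires its action when the given metadata field contains the value."""
    return {"name": name, "field": field, "value": value, "action": action}

def run_rules(asset_name, metadata, rules):
    """Return (action, asset name) for every rule the asset matches."""
    fired = []
    for rule in rules:
        if rule["value"] in metadata.get(rule["field"], []):
            fired.append((rule["action"], asset_name))
    return fired

# Hypothetical rules mirroring the two examples in the text.
rules = [
    make_rule("celebrity stills", "face", "Player 10", "send_to_marketing"),
    make_rule("logo licensing", "logo", "SponsorCo", "queue_for_licensing"),
]
metadata = {"face": ["Player 10"], "logo": ["OtherBrand"]}
print(run_rules("clip_003.mov", metadata, rules))
# [('send_to_marketing', 'clip_003.mov')]
```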

Getting the best results from AI with your MAM

Evolphin Zoom MAM is the best AI-powered solution for transforming your post-production workflow

A MAM system can help ensure consistency and increase accuracy, especially when supervised by humans.

Evolphin Zoom MAM has proven to be a critical tool in the video post-production process of renowned organizations such as InterMilan FC, Mercedes, WorldVision, and KQED.

By automating mundane tasks and speeding up production times, Evolphin Zoom MAM has reduced these organizations’ dependence on manual labor in video production and increased their profitability. For organizations looking to leverage the power of AI to gain a competitive advantage, Evolphin Zoom MAM is a strategic investment that directly impacts both the quality and speed of content output.

Evolphin Zoom MAM is the best AI-powered MAM for your organization, but that’s not all. Evolphin Zoom offers many more benefits than competing solutions. We don’t stop at putting the power of AI in your hands; we also provide a working solution for ingesting, retrieving, tracking versions, and integrating with your critical production stack.

With the technical setup, consultations, and ongoing technical support included as part of the package, adopting Evolphin Zoom is as easy as turning on a light switch.

Contact us today if you’re interested in learning how Evolphin Zoom MAM’s AI capabilities can transform your post-production process.